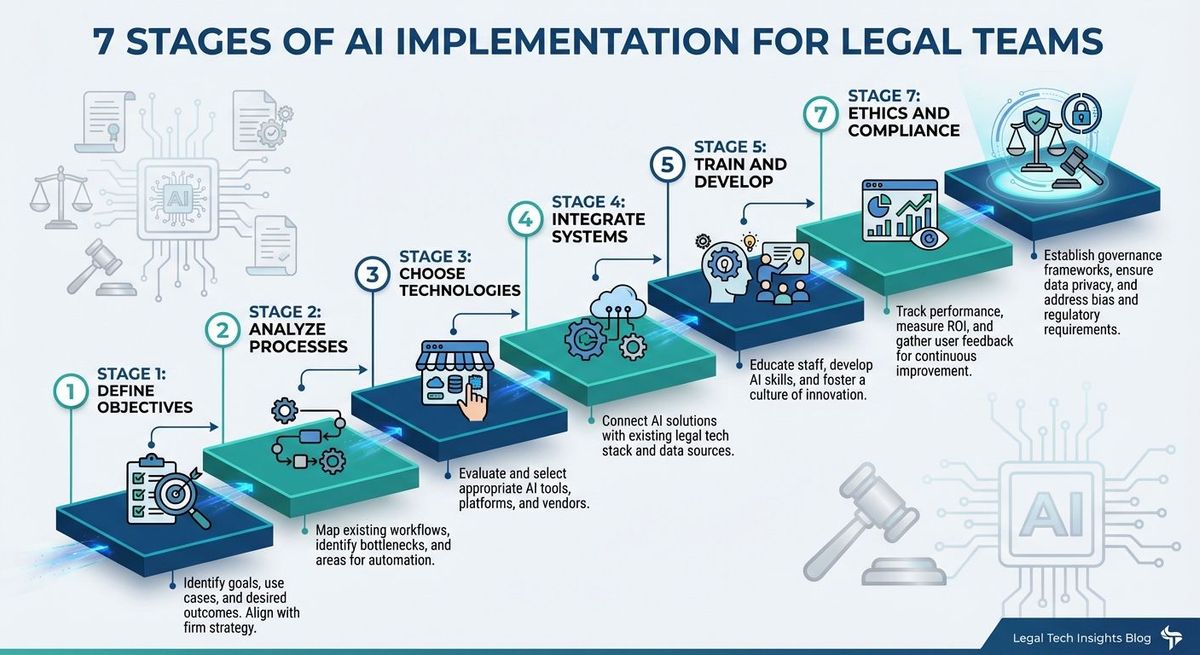

Seven Stages of AI Implementation for Legal Teams

Nearly 80 percent of legal departments plan to increase their AI investment this year. Fewer than 15 percent have a governance framework in place. That gap — between enthusiasm and execution — is where most legal AI projects go to die.

In the first two parts of this series, we examined what happens when firms use AI as a blunt cost-cutting instrument and what happens when they use it as a precision tool to amplify their team. This post goes deeper into the how. Over the past two years of building an AI-native practice at FinTech Law, we have refined a seven-stage implementation framework that governs how we evaluate, adopt, and operationalize AI across our legal workflows.

This is not theory. Every stage reflects something we learned — sometimes the hard way — while integrating AI into a working corporate and securities law practice. The framework applies whether you are a six-lawyer firm, a corporate legal department, or a compliance team at a regulated financial institution.

The Framework: Seven Stages

Stage 1: Define Objectives

Start with the problem, not the tool. The most common implementation mistake we see is firms shopping for AI platforms before they have identified which workflows actually need improvement. AI is a solution — but a solution to what, exactly?

The right first question is deceptively simple: where is your team's time going, and how much of that time involves repetitive work that does not require senior judgment? At FinTech Law, we identified first-draft document production, regulatory research compilation, and routine client correspondence as the highest-value automation targets — not because they were easy, but because they consumed the most hours relative to the judgment they required.

If you cannot articulate a specific, measurable objective for an AI tool before you buy it, you are not ready to buy it.

Stage 2: Analyze Processes

Map your workflows before introducing AI into them. This sounds obvious, but most organizations skip it entirely.

Process analysis means documenting how work actually moves through your team — from intake to delivery. Where do handoffs occur? Where does work queue up waiting for a single decision-maker? Where do attorneys spend time on tasks that a well-structured template or AI-generated first draft could handle?

We use a straightforward diagnostic: for every task in a matter lifecycle, we ask whether the task requires professional judgment, whether it requires human communication, or whether it is essentially information processing. AI excels at the third category, supports the first, and should generally stay away from the second without close supervision.
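The diagnostic above can be sketched as a simple lookup. This is a minimal illustration rather than our production tooling: the `TaskKind` categories mirror the three questions in the text, but the `Task` structure, the role labels, and the sample tasks are assumptions made up for the example.

```python
from dataclasses import dataclass
from enum import Enum


class TaskKind(Enum):
    JUDGMENT = "professional judgment"
    COMMUNICATION = "human communication"
    INFORMATION = "information processing"


# Hypothetical labels for the AI role that each category suggests.
AI_ROLE = {
    TaskKind.INFORMATION: "AI-led, human-reviewed",
    TaskKind.JUDGMENT: "AI-supported, attorney decides",
    TaskKind.COMMUNICATION: "human-led, AI only under close supervision",
}


@dataclass
class Task:
    name: str
    kind: TaskKind


def triage(tasks):
    """Return {task name: suggested AI role} for the tasks in a matter lifecycle."""
    return {task.name: AI_ROLE[task.kind] for task in tasks}


# Illustrative tasks only; a real map comes from your own process analysis.
matter = [
    Task("Compile regulatory research", TaskKind.INFORMATION),
    Task("Decide disclosure strategy", TaskKind.JUDGMENT),
    Task("Client call on deal terms", TaskKind.COMMUNICATION),
]

for name, role in triage(matter).items():
    print(f"{name}: {role}")
```

The classification itself still has to be made by someone who knows the work. The code only makes the resulting map explicit and easy to review.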

The firms that skip this step end up layering AI on top of broken processes, which produces faster broken processes.

Stage 3: Choose Technologies

Match tools to tasks, not to vendor marketing. The legal AI market in 2026 is saturated with platforms promising to transform your practice. Most of them solve some problems well and others poorly. No single model or tool is right for every workflow.

The evaluation criteria that have served us well are straightforward. Does the tool flag uncertainty rather than fabricating answers? Can it process the document lengths our practice requires? Does it follow complex instructions reliably? Does the vendor's data handling meet our client confidentiality obligations? And critically — does it solve the specific problem we identified in Stage 1?

We maintain a formal vendor due diligence process for every AI tool we evaluate. That process has saved us from adopting tools that looked impressive in demos but could not meet our data security requirements or professional responsibility obligations in practice.

Stage 4: Integrate Systems

AI must plug into your existing infrastructure — not replace it. The most effective implementations connect AI capabilities to the practice management, document management, and communication systems your team already uses daily.

Integration failures are the silent killer of legal AI projects. A tool that produces excellent output but requires attorneys to copy and paste between three platforms will not get used. The test is simple: does the AI tool reduce the total number of steps in a workflow, or does it add steps? If the answer is the latter, the integration needs more work before rollout.

Stage 5: Train and Develop

Treat AI like a new hire. It needs onboarding, clear expectations, and ongoing supervision.

The most impactful investment we made was not in the AI tool itself but in the prompt engineering and system instruction infrastructure around it. Every AI interaction at FinTech Law begins with firm-specific instructions that define output standards, citation requirements, and professional responsibility guardrails. We maintain a prompt library that is versioned, documented, and continuously refined based on output quality.
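To make "versioned, documented" concrete, here is a minimal sketch of a prompt library in which every revision is kept alongside a note explaining why it changed. The class names, the `FIRM_INSTRUCTIONS` text, and the sample prompts are hypothetical and are not our actual instructions. A real library would more likely live in version control or a database.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass(frozen=True)
class PromptVersion:
    version: int
    text: str
    change_note: str  # documents why this revision was made


@dataclass
class PromptLibrary:
    entries: Dict[str, List[PromptVersion]] = field(default_factory=dict)

    def publish(self, name: str, text: str, change_note: str) -> PromptVersion:
        """Append a new version; earlier versions are retained for audit."""
        history = self.entries.setdefault(name, [])
        version = PromptVersion(len(history) + 1, text, change_note)
        history.append(version)
        return version

    def latest(self, name: str) -> PromptVersion:
        return self.entries[name][-1]


# Placeholder firm-wide instructions (assumed wording, for illustration only).
FIRM_INSTRUCTIONS = (
    "Cite every authority relied on. "
    "Flag uncertainty explicitly instead of guessing."
)


def build_system_prompt(library: PromptLibrary, name: str) -> str:
    """Prefix the latest task prompt with the firm-wide instructions."""
    prompt = library.latest(name)
    return f"{FIRM_INSTRUCTIONS}\n\n[{name} v{prompt.version}]\n{prompt.text}"


library = PromptLibrary()
library.publish("first_draft", "Draft the requested document.", "initial version")
library.publish(
    "first_draft",
    "Draft the requested document. List open questions at the end.",
    "reviewers wanted open questions surfaced",
)
print(build_system_prompt(library, "first_draft"))
```

Stamping the version number into the prompt means a weak output can be traced back to the exact instructions that produced it.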

Training also means training your people. Attorneys and staff need hands-on experience with the tools, not just a demo and a login. The firms that invest in building genuine AI competency across their teams — not just among the technology-curious partners — see materially better adoption and results.

Stage 6: Monitor and Evaluate

Measure what matters and refine continuously. The initial implementation is the beginning, not the end.

Track time-per-task before and after adoption. Monitor output quality. Pay attention to where attorneys override or substantially rewrite AI-generated work — those patterns reveal where the tool is underperforming or where your system instructions need refinement.
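Both measurements reduce to simple arithmetic. The sketch below assumes you log minutes per task before and after adoption, and record for each AI draft whether the reviewing attorney substantially rewrote it. The function names and the sample figures are invented for illustration and are not results from our practice.

```python
from collections import defaultdict
from statistics import mean


def pct_time_saved(before_minutes, after_minutes):
    """Percentage reduction in average time-per-task after adoption."""
    before, after = mean(before_minutes), mean(after_minutes)
    return round(100 * (before - after) / before, 1)


def override_rate_by_task(reviews):
    """Share of AI drafts substantially rewritten, grouped by task type.

    `reviews` is an iterable of (task_type, was_rewritten) pairs.
    """
    totals = defaultdict(int)
    overrides = defaultdict(int)
    for task_type, was_rewritten in reviews:
        totals[task_type] += 1
        overrides[task_type] += int(was_rewritten)
    return {task: overrides[task] / totals[task] for task in totals}


# Made-up sample data.
before = [120, 90, 150]  # minutes per first draft, pre-adoption
after = [60, 45, 75]     # minutes per first draft, post-adoption
reviews = [
    ("first draft", False),
    ("first draft", True),
    ("research memo", True),
    ("research memo", True),
]

print(pct_time_saved(before, after))
print(override_rate_by_task(reviews))
```

A task type with a persistently high override rate is a candidate for revised system instructions or for removal from the AI-assisted workflow.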

We conduct periodic reviews of our AI-assisted workflows with a simple question: is this tool still earning its place in the process? Not every tool that was useful six months ago remains the best option today. The AI landscape moves fast, and your evaluation process should keep pace.

Stage 7: Ethics and Compliance

This is the stage most organizations save for last, and that is exactly backwards. Governance belongs at the foundation — not the roof.

ABA Formal Opinion 512 has established that attorneys have an ethical obligation to understand the AI tools they use. The EU AI Act reaches full effect for high-risk systems in August 2026. The Colorado AI Act takes effect this summer. State bar associations are issuing guidance at an accelerating pace.

At FinTech Law, our AI governance framework operates on a three-phase model. Before any AI tool is deployed, we conduct vendor due diligence, establish system instructions, and calibrate risk posture by matter type. During use, structured prompts enforce citation, formatting, and reasoning requirements on every output. After output is generated, staff review is mandatory: no AI-generated work product reaches a client without a staff member signing off.
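The third phase is the easiest to enforce in software, because it can be a hard gate rather than a policy reminder. The sketch below is one way such a gate might look. `WorkProduct`, `ReviewRequired`, and `release_to_client` are hypothetical names, and a real implementation would sit inside the document management or delivery system.

```python
from dataclasses import dataclass
from typing import Optional


class ReviewRequired(Exception):
    """Raised when AI-generated work is released without a recorded sign-off."""


@dataclass
class WorkProduct:
    matter_id: str
    text: str
    ai_generated: bool
    reviewed_by: Optional[str] = None

    def sign_off(self, reviewer: str) -> None:
        self.reviewed_by = reviewer


def release_to_client(item: WorkProduct) -> str:
    """Block delivery of AI-generated work that has no reviewer on record."""
    if item.ai_generated and not item.reviewed_by:
        raise ReviewRequired(f"{item.matter_id}: sign-off required before release")
    return f"released {item.matter_id} (reviewed by {item.reviewed_by or 'n/a'})"


draft = WorkProduct("M-001", "AI-generated first draft", ai_generated=True)

try:
    release_to_client(draft)
except ReviewRequired as err:
    print(f"blocked: {err}")

draft.sign_off("A. Reviewer")  # placeholder reviewer name
print(release_to_client(draft))
```

Recording who signed off, not merely that someone did, also leaves an audit trail if a client or regulator later asks how a document was produced.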

We disclose our AI practices directly in client engagement letters. Clients receive information about which tools we use, how we protect their data, and their right to decline AI involvement in their matters. Transparency is not a liability. It is a competitive advantage that builds trust.

Where Most Organizations Stall

If this framework has a single lesson, it is this: most legal AI projects fail at Stage 1 and Stage 2, not Stage 3. Organizations rush to buy tools without first defining what problem they are solving or mapping the workflows the tools will enter. The result is expensive software sitting underused while the operational problems it was supposed to address remain untouched.

The second most common failure point is Stage 7 — not because firms do not care about ethics, but because they treat governance as an afterthought. Building compliance into your AI operations from day one is dramatically less expensive and disruptive than retrofitting it after a problem surfaces.

Key Takeaways

- Start with the problem, not the tool. Define measurable objectives and map your workflows before evaluating any AI platform.

- No single AI tool solves everything. Match technologies to specific workflow problems identified through honest process analysis.

- Integration determines adoption. If a tool adds steps to your attorneys' workflow rather than removing them, it will not get used regardless of its capabilities.

- Invest in infrastructure around the AI, not just the AI itself. Prompt libraries, system instructions, and training programs drive more value than the underlying model.

- Build governance first, not last. Ethics and compliance frameworks are cheaper and more effective when they are foundational rather than reactive.

The Bottom Line

Implementing AI in legal operations is not a technology project. It is an organizational design project that happens to involve technology. The firms and legal departments that approach it with the discipline of a structured framework — defining objectives, mapping processes, selecting tools deliberately, and building governance into the foundation — will outperform those that simply buy platforms and hope for the best.

This is what we mean by legal engineering. Not adopting tools. Redesigning how legal services are delivered.

If your firm or legal department is building an AI implementation strategy, we can help you get it right from Stage 1.

Up next in the Legal Engineering Series — Part 4: Why Your Legal AI Needs Guardrails, Not Just Prompts.